
Note: the following article recently appeared in Catalyst, the official publication of the International Association of Business Communicators (IABC). I volunteer for both IABC DC Metro and IABCLA, and I wanted to share the content.

By: Adam Fuss, SCMP, MITI

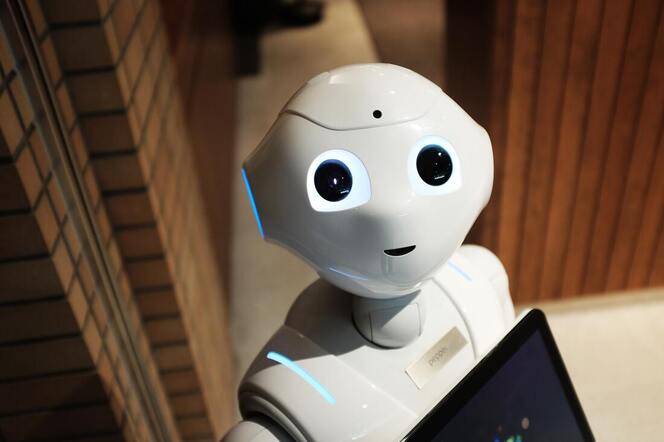

On 25 January, Shel Holtz’s Catalyst article, “Generative Artificial Intelligence for Communicators,” served as a wake-up call of sorts. Widely respected in the IABC community and the broader communication profession, Shel outlined compelling and ethically sound use cases for how generative artificial intelligence (AI) can fit within the professional communicator’s toolkit. Generative AI is all the rage now, and for good reason, given its potential to transform content creation and other areas of life. Although far from perfect (no technology is), generative AI is here to stay and is almost certain to improve rapidly. Rather than debate whether it should play a role in our work, we as professional communicators would be far better served by debating how we should use it.


Author: I'm Eli Natinsky and I'm a communication specialist. This blog explores my work and professional interests. I also delve into other topics, including media, marketing, pop culture, and technology.
